diff --git a/.gitattributes b/.gitattributes

index f23f37e..dacca79 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -45,3 +45,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.wasm filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

+

+notebook.ipynb filter=lfs diff=lfs merge=lfs -text

\ No newline at end of file

diff --git a/README.md b/README.md

index b29421f..dc38374 100644

--- a/README.md

+++ b/README.md

@@ -1,5 +1,14 @@

---

license: mit

+language:

+- en

+- zh

+base_model:

+- zai-org/GLM-4-9B-0414

+pipeline_tag: image-text-to-text

+library_name: transformers

+tags:

+- reasoning

---

# GLM-4.1V-9B-Thinking

@@ -8,45 +17,56 @@ license: mit

- 📖 查看 GLM-4.1V-9B-Thinking 论文 。

+ 📖 View the GLM-4.1V-9B-Thinking paper.

- 📍 在 智谱大模型开放平台 使用 GLM-4.1V-9B-Thinking 的API服务。

+ 📍 Use the GLM-4.1V-9B-Thinking API on the Zhipu Foundation Model Open Platform.

-## 模型介绍

-视觉语言大模型(VLM)已经成为智能系统的关键基石。随着真实世界的智能任务越来越复杂,VLM模型也亟需在基本的多模态感知之外,

-逐渐增强复杂任务中的推理能力,提升自身的准确性、全面性和智能化程度,使得复杂问题解决、长上下文理解、多模态智能体等智能任务成为可能。

+## Model Introduction

-基于 [GLM-4-9B-0414](https://github.com/zai-org/GLM-4) 基座模型,我们推出新版VLM开源模型 **GLM-4.1V-9B-Thinking**

-,引入思考范式,通过课程采样强化学习 RLCS(Reinforcement Learning with Curriculum Sampling)全面提升模型能力,

-达到 10B 参数级别的视觉语言模型的最强性能,在18个榜单任务中持平甚至超过8倍参数量的 Qwen-2.5-VL-72B。

-我们同步开源基座模型 **GLM-4.1V-9B-Base**,希望能够帮助更多研究者探索视觉语言模型的能力边界。

+Vision-Language Models (VLMs) have become foundational components of intelligent systems. As real-world AI tasks grow

+increasingly complex, VLMs must evolve beyond basic multimodal perception to enhance their reasoning capabilities in

+complex tasks. This involves improving accuracy, comprehensiveness, and intelligence, enabling applications such as

+complex problem solving, long-context understanding, and multimodal agents.

+

+Based on the [GLM-4-9B-0414](https://github.com/zai-org/GLM-4) foundation model, we present the new open-source VLM

+**GLM-4.1V-9B-Thinking**, designed to explore the upper limits of reasoning in vision-language models. By introducing

+a "thinking paradigm" and leveraging reinforcement learning, the model significantly enhances its capabilities. It

+achieves state-of-the-art performance among 10B-parameter VLMs, matching or even surpassing the 72B-parameter

+Qwen-2.5-VL-72B on 18 benchmark tasks. We are also open-sourcing the base model GLM-4.1V-9B-Base to

+support further research into the boundaries of VLM capabilities.

-与上一代的 CogVLM2 及 GLM-4V 系列模型相比,**GLM-4.1V-Thinking** 有如下改进:

+Compared to the previous generation models CogVLM2 and the GLM-4V series, **GLM-4.1V-Thinking** offers the

+following improvements:

-1. 系列中首个推理模型,不仅仅停留在数学领域,在多个子领域均达到世界前列的水平。

-2. 支持 **64k** 上下长度。

-3. 支持**任意长宽比**和高达 **4k** 的图像分辨率。

-4. 提供支持**中英文双语**的开源模型版本。

+1. The first reasoning-focused model in the series, achieving world-leading performance not only in mathematics but also

+ across various sub-domains.

+2. Supports **64k** context length.

+3. Handles **arbitrary aspect ratios** and up to **4K** image resolution.

+4. Provides an open-source version with **bilingual Chinese and English** support.

-## 榜单信息

+## Benchmark Performance

-GLM-4.1V-9B-Thinking 通过引入「思维链」(Chain-of-Thought)推理机制,在回答准确性、内容丰富度与可解释性方面,

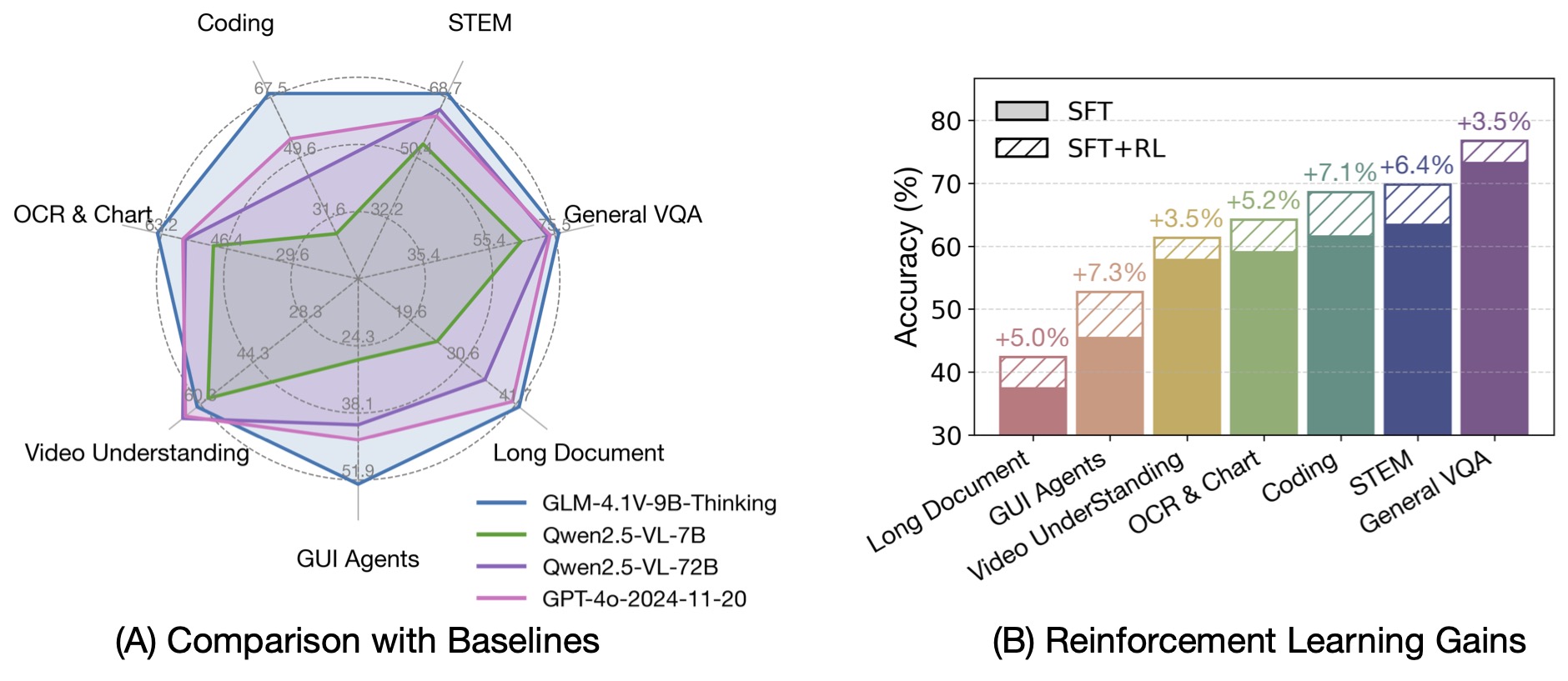

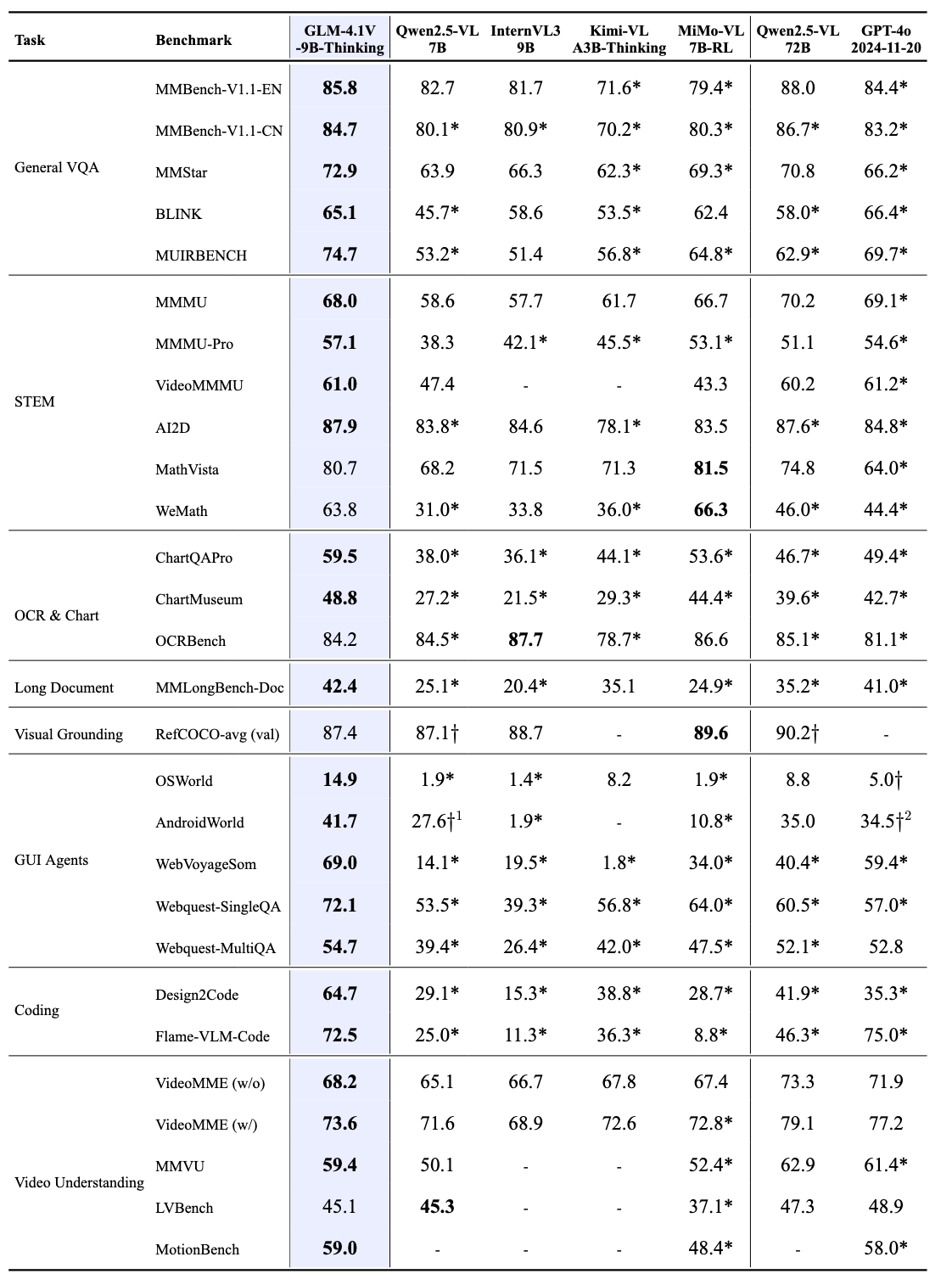

-全面超越传统的非推理式视觉模型。在28项评测任务中有23项达到10B级别模型最佳,甚至有18项任务超过8倍参数量的Qwen-2.5-VL-72B。

+By incorporating the Chain-of-Thought reasoning paradigm, GLM-4.1V-9B-Thinking significantly improves answer accuracy,

+richness, and interpretability. It comprehensively surpasses traditional non-reasoning visual models.

+Out of 28 benchmark tasks, it achieves the best performance among 10B-level models on 23 tasks,

+and even outperforms the 72B-parameter Qwen-2.5-VL-72B on 18 tasks.

-## 快速推理

+## Quick Inference

+

+This is a simple example of running single-image inference using the `transformers` library.

+First, install the `transformers` library (version 4.57.1 or later):

-这里展现了一个使用`transformers`进行单张图片推理的代码。首先,从源代码安装`transformers`库。

```

pip install "transformers>=4.57.1"

```

-接着按照以下代码运行:

+Then, run the following code:

```python

from transformers import AutoProcessor, Glm4vForConditionalGeneration

@@ -59,7 +79,7 @@ messages = [

"content": [

{

"type": "image",

- "url": "https://model-demo.oss-cn-hangzhou.aliyuncs.com/Grayscale_8bits_palette_sample_image.png"

+ "url": "https://upload.wikimedia.org/wikipedia/commons/f/fa/Grayscale_8bits_palette_sample_image.png"

},

{

"type": "text",

@@ -68,13 +88,12 @@ messages = [

],

}

]

+processor = AutoProcessor.from_pretrained(MODEL_PATH, use_fast=True)

model = Glm4vForConditionalGeneration.from_pretrained(

pretrained_model_name_or_path=MODEL_PATH,

torch_dtype=torch.bfloat16,

device_map="auto",

)

-processor = AutoProcessor.from_pretrained(MODEL_PATH, use_fast=True)

-

inputs = processor.apply_chat_template(

messages,

tokenize=True,

@@ -87,6 +106,5 @@ output_text = processor.decode(generated_ids[0][inputs["input_ids"].shape[1]:],

print(output_text)

```

-

-视频推理,网页端Demo部署等更代码请查看我们的 [github](https://github.com/zai-org/GLM-V)。

-

+For video reasoning, web demo deployment, and more code, please check

+our [GitHub](https://github.com/zai-org/GLM-V).

\ No newline at end of file

diff --git a/notebook.ipynb b/notebook.ipynb

new file mode 100644

index 0000000..eb9cc9a

--- /dev/null

+++ b/notebook.ipynb

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:4e773310cc43f99e534f54b458655bc6f70c439304ac11b6624edec476b64df1

+size 2537101